The Digital Wildfire: How Social Media Misinformation Is Becoming More Dangerous Than Terrorism

Vikram keshari Jena

In the twenty-first century, humanity has entered an era where information travels faster than armies and rumors can destabilize societies more rapidly than bombs. The rise of social media platforms has fundamentally transformed how people communicate, learn, and form opinions. Platforms such as Facebook, X (formerly Twitter), YouTube, Instagram, and WhatsApp connect billions of individuals across continents, creating a vast digital public sphere. Yet this extraordinary connectivity has also produced a darker reality: the unprecedented spread of misinformation and disinformation. Unlike traditional propaganda, which required state machinery and mass media networks, false narratives today can be created by anyone with a smartphone and an internet connection. Within minutes, misleading posts, manipulated images, or fabricated stories can reach millions of people across the globe. In this sense, misinformation in the digital world functions like a wildfire: rapid, uncontrollable, and capable of causing widespread destruction. Increasingly, scholars, policymakers, and security experts argue that the damage caused by digital misinformation may rival, and in some cases exceed, the destructive potential of terrorism.

One of the reasons misinformation is so dangerous lies in its ability to manipulate perceptions of reality. Unlike terrorism, which uses physical violence to create fear, misinformation operates psychologically. It alters how people interpret events, understand political debates, and trust institutions. Research from the New York University Center for Social Media and Politics indicates that individuals with strong ideological views are more likely to encounter and believe misinformation online, making digital ecosystems fertile ground for polarization and manipulation. This phenomenon creates what researchers call “information bubbles,” where users are repeatedly exposed to content that confirms their beliefs, regardless of its accuracy. In such an environment, false information spreads rapidly because people are more likely to share emotionally charged content without verifying its authenticity. Studies have also demonstrated that engagement signals such as likes, shares, and comments can increase people’s vulnerability to misinformation, as highly shared posts appear more credible even when they are false. The result is a digital ecosystem where truth struggles to compete with viral deception.

Recent global events provide compelling evidence of how misinformation can destabilize societies. During international conflicts, digital propaganda has become a powerful weapon used by governments and non-state actors alike. For example, researchers analyzing social media activity during the Russia-Ukraine conflict discovered widespread circulation of propaganda and misleading content across platforms like Facebook and Twitter, with only a small portion of these posts removed by moderation systems. Such campaigns are not merely about shaping narratives; they can influence public opinion, diplomatic relations, and even military strategies. In modern information warfare, the battlefield is not limited to physical territory; it extends into the digital spaces where public perception is formed. In fact, analysts increasingly describe social media platforms as “extensions of the battlefield,” where misinformation becomes a strategic tool for geopolitical influence.

Another striking example emerged during the Gaza conflict, where dozens of false claims circulated online within hours of major events. According to monitoring organizations, at least fourteen viral false narratives related to the war received over twenty million views within just a few days across major platforms. Such misinformation included fabricated videos, misidentified photographs, and manipulated images designed to inflame emotions and deepen political divisions. In some cases, artificial intelligence was used to create convincing yet entirely fake visual evidence. Experts warn that AI-generated misinformation is lowering the technical barriers for deception, allowing individuals or state actors to fabricate highly believable images and videos that can quickly go viral online. When millions of people encounter such content before fact-checking organizations can respond, the false narrative often becomes embedded in public consciousness, shaping opinions long after it has been disproven.

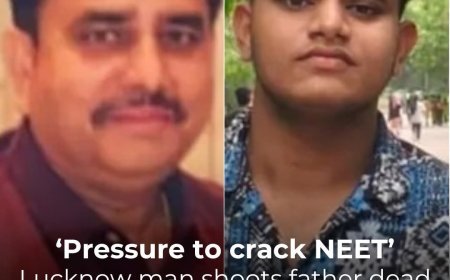

The consequences of misinformation are not limited to international politics; they frequently spill over into real-world violence and social unrest. A striking case study comes from the United Kingdom, where false information circulating on social media about a criminal incident led to large-scale protests and hostility toward immigrant communities. Parliamentary investigations found that algorithmic recommendation systems amplified misleading posts that falsely identified the suspect as a Muslim immigrant, generating millions of views and fueling public outrage. In this case, misinformation did not merely distort the facts; it actively inflamed tensions and contributed to social division. Such incidents demonstrate how digital platforms, designed to maximize user engagement, can inadvertently promote sensational or misleading content because it attracts more attention than verified information.

Digital misinformation also poses serious threats to public health and democracy. During the COVID-19 pandemic, conspiracy theories about vaccines, medical treatments, and the origins of the virus spread rapidly across social media networks. False claims about miracle cures and anti-vaccine propaganda undermined public trust in scientific institutions and health authorities. Scholars have described this phenomenon as an “infodemic,” where the overwhelming volume of false information complicates efforts to manage real crises. The spread of misinformation during the pandemic illustrates a disturbing truth: misinformation does not merely distort opinions; it can directly endanger lives by influencing medical decisions and public behavior. In the long term, repeated exposure to false narratives erodes trust in science, journalism, and democratic institutions, creating a climate where facts themselves become contested.

Another dimension of the misinformation crisis is the harassment and intimidation of journalists, activists, and public figures. According to a global study involving participants from more than one hundred countries, over two-thirds of women journalists and activists have experienced digital abuse linked to online misinformation and harassment campaigns. In many cases, these digital attacks escalate into real-world threats, including stalking, harassment, and violence. This pattern reveals how misinformation can be used as a tool of intimidation, silencing voices that challenge powerful interests. When journalists and activists are targeted through coordinated misinformation campaigns, the broader consequence is a weakening of democratic accountability and public discourse.

The scale of the problem is staggering. In recent years, research organizations and policy institutions have begun treating misinformation as a national security issue. Institutions such as the Network Contagion Research Institute study how harmful narratives spread across digital networks and how they can incite extremist behavior. Their work suggests that online misinformation behaves similarly to contagious diseases, spreading through networks of users and amplifying emotional responses such as anger, fear, or resentment. Once such narratives gain momentum, they can mobilize communities, shape political movements, and even incite violence. In this sense, misinformation operates as a form of psychological warfare, an invisible yet powerful force capable of reshaping societies without firing a single bullet.

Despite these challenges, there are also emerging efforts to combat the spread of misinformation. Civil society movements such as the Swedish initiative #IamHere have demonstrated how digital communities can respond collectively to false narratives and hate speech. By encouraging users to challenge misinformation and promote fact-based discussion, such initiatives represent a grassroots attempt to restore civility and accuracy in online conversations. At the same time, governments and technology companies are experimenting with regulatory frameworks, algorithmic transparency, and AI-based fact-checking systems. However, these efforts remain limited compared to the scale and speed of misinformation networks.

Ultimately, the struggle against misinformation is not merely a technological challenge but a cultural and intellectual one. The digital age has democratized information production, allowing billions of individuals to participate in global conversations. Yet this democratization has also blurred the boundaries between truth and falsehood. In an era where anyone can publish information instantly, critical thinking and media literacy become essential tools for democratic societies. Citizens must learn to question sources, verify information, and resist the emotional impulses that often drive the viral spread of misinformation.

The greatest danger of misinformation lies not only in the falsehoods themselves but in the erosion of trust they create. When people can no longer distinguish between truth and manipulation, the foundations of democratic governance begin to weaken. Elections become vulnerable to propaganda, public debates become polarized, and institutions lose legitimacy. Terrorism seeks to create fear through violence; misinformation achieves a similar outcome by destabilizing trust, dividing societies, and manipulating perceptions of reality.

In the digital age, therefore, the fight for truth has become one of the most important struggles of our time. Governments, technology companies, researchers, journalists, and citizens must collaborate to build a resilient information ecosystem that values accuracy, accountability, and critical thinking. Without such efforts, the digital world may become a landscape where misinformation spreads unchecked, undermining the very foundations of democracy and social cohesion.

If terrorism threatens bodies, misinformation threatens minds, and in the long run the latter may prove even more destructive.